Jack Herrington has been shipping software for 40 years and educating developers on YouTube as the Blue Collar Coder. As a core contributor to TanStack, he’s now building TanStack AI, a type-safe, provider-agnostic SDK for adding AI to web apps. Its upcoming Code Mode feature shows what happens when you let agents orchestrate MCP tools with TypeScript.

This article is adapted from an MCP MVP interview with Jack Herrington, TanStack Core at Netlify.

In 2025, developers embraced the TypeScript stack for building AI-powered user-facing apps. Every framework has its own way of handling streaming, tool calling, and provider adapters (we’ve already covered some of the most popular ones in our documentation). The TypeScript community values an opinionated approach to building, and service providers are often all too happy to provide such tooling to lure prospective customers to their platform.

Jack Herrington has been working on a truly open-source, opinionated alternative without the lock-in. A core contributor to TanStack, he is known to hundreds of thousands of developers as the Blue Collar Coder on YouTube. He is building TanStack AI, the newest library in the TanStack ecosystem. It takes the same philosophy that made libraries like TanStack Query, TanStack Router, and TanStack Start popular (framework-agnostic, type-safe, no vendor lock-in) and applies it to AI.

“We’ve been really thoughtful about figuring out the ways that you want to extend or change the basic structure,” Jack told RL Nabors during an interview for Arcade’s MCP MVP series. “The very simple chat demo is about 20 lines of server code and 10 lines of frontend code. But we wanted flexibility for real use cases.”

How TanStack AI stands out among agent frameworks

TanStack AI provides a type-safe, provider-agnostic way to build AI features into web applications. Adapters for OpenAI, Anthropic, Gemini, and Ollama ship out of the box, with client libraries for React, Solid, and vanilla JavaScript. The core library handles streaming, tool execution, and agentic loops. It is framework-agnostic, true to TanStack’s philosophy of fitting into your existing stack rather than replacing it.

What makes it particularly interesting for developers working with MCP tools is that TanStack AI natively supports JSON Schema tool definitions. If your MCP server already exposes tools with JSON Schema, you don’t need Zod wrappers or conversion layers. Just pass the definitions in, and they work. Jack noted that this was a deliberate design choice to simplify integration. Small touches like this have helped TanStack win the hearts of React developers.
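For readers who have not looked at one closely, here is roughly what such a definition looks like. An MCP tool is listed with a name, a description, and an `inputSchema` written as JSON Schema. The `search_movies` tool and the `missingRequired` helper below are hypothetical and exist only for illustration; they are not part of TanStack AI or any MCP SDK.

```typescript
// Shape of a tool as listed by an MCP server: the input contract is plain JSON Schema.
interface JsonSchemaObject {
  type: "object";
  properties: Record<string, { type: string; description?: string }>;
  required?: string[];
}

interface McpToolDescriptor {
  name: string;
  description: string;
  inputSchema: JsonSchemaObject;
}

// Hypothetical tool, for illustration only.
const searchMovies: McpToolDescriptor = {
  name: "search_movies",
  description: "Search for movies by title.",
  inputSchema: {
    type: "object",
    properties: {
      query: { type: "string", description: "Title or partial title" },
      year: { type: "number", description: "Optional release year" },
    },
    required: ["query"],
  },
};

// Hypothetical helper: list the required arguments that a call is missing.
function missingRequired(
  tool: McpToolDescriptor,
  args: Record<string, unknown>,
): string[] {
  return (tool.inputSchema.required ?? []).filter((key) => !(key in args));
}
```

Because the schema is ordinary data, a framework that accepts JSON Schema directly can consume this object without a translation step.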

TanStack AI also supports the AG-UI protocol, an open standard for agent-to-frontend communication. This means if you have a backend in PHP or Python that speaks AG-UI, TanStack AI’s client libraries will work with it, making it the first TypeScript AI framework to ship with this cross-language interoperability.

TanStack AI also has adapters for image generation, text-to-speech, speech-to-text, and real-time audio (with support on the way for services such as OpenAI’s real-time API and ElevenLabs).

Tools can be isomorphic. Define them once with toolDefinition(), then implement them separately for server and client. Additionally, there’s built-in support for tool approval workflows that keep humans in the loop.
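The "define once, implement twice" idea can be sketched without the library. The code below is a self-contained stand-in: `defineTool`, `server`, `client`, and the `get_theme` tool are hypothetical names chosen for this example and should not be read as TanStack AI’s actual `toolDefinition()` signature.

```typescript
type Impl<In, Out> = (input: In) => Out;

interface BoundTool<In, Out> {
  name: string;
  runsOn: "server" | "client";
  execute: Impl<In, Out>;
}

// One shared definition; the input and output types travel with it.
function defineTool<In, Out>(name: string, description: string) {
  return {
    name,
    description,
    // Each environment supplies its own implementation of the same contract.
    server: (execute: Impl<In, Out>): BoundTool<In, Out> => ({
      name,
      runsOn: "server",
      execute,
    }),
    client: (execute: Impl<In, Out>): BoundTool<In, Out> => ({
      name,
      runsOn: "client",
      execute,
    }),
  };
}

// Hypothetical tool: look up a user's UI theme.
const getTheme = defineTool<{ userId: string }, { theme: string }>(
  "get_theme",
  "Look up a user's UI theme.",
);

// Server version: pretend to consult a database.
const serverTheme = getTheme.server(({ userId }) => ({
  theme: userId === "u1" ? "dark" : "light",
}));

// Client version: pretend to read a local preference.
const clientTheme = getTheme.client(() => ({ theme: "light" }));
```

Both implementations share one name and one typed contract, so the model sees a single tool regardless of where it runs.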

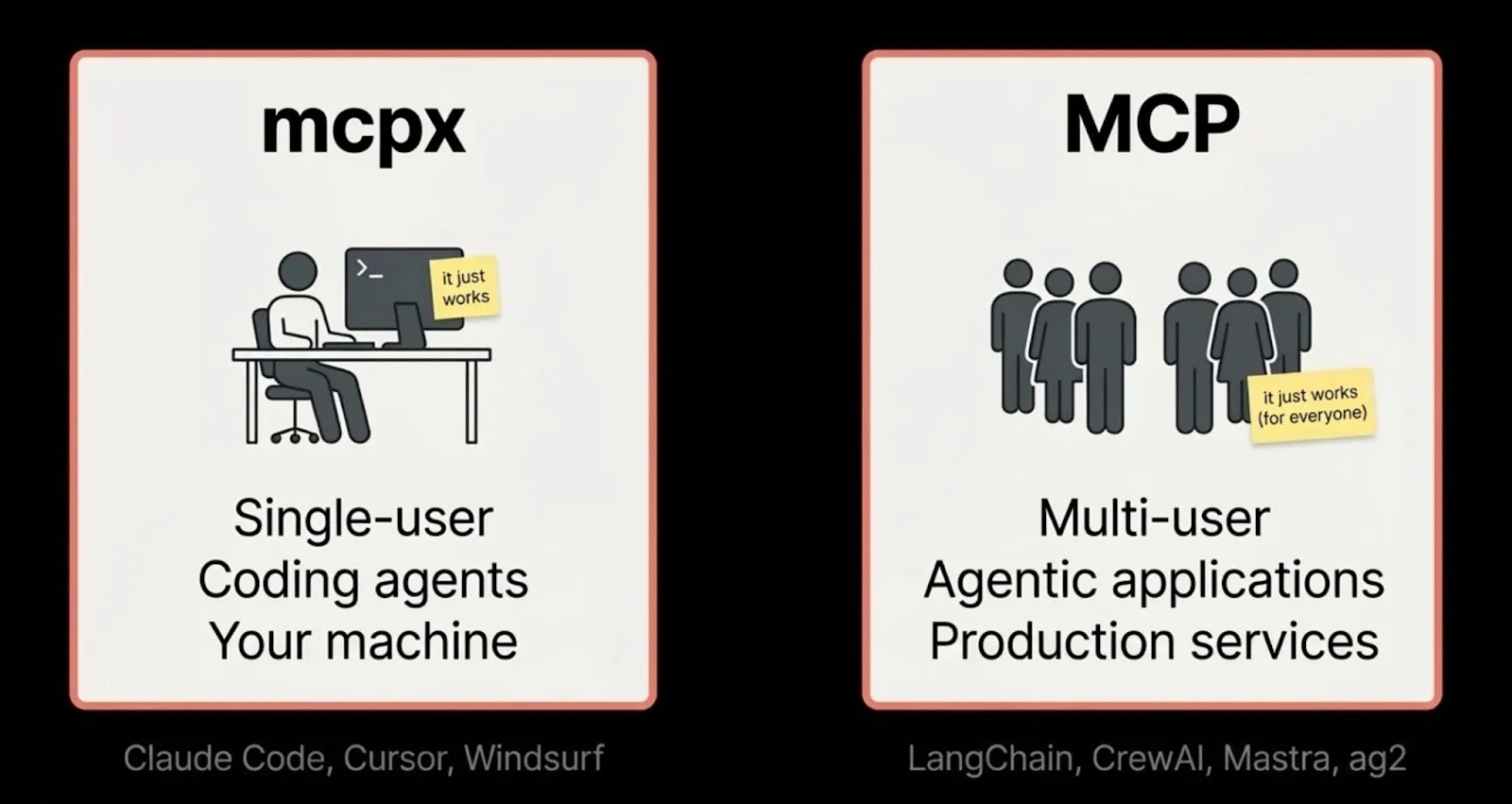

MCP tools without an MCP client

One of the strongest points in the conversation was how naturally MCP tools integrate with TanStack AI. RL (Rachel-Lee) Nabors built a chatbot using Arcade’s MCP servers with TanStack AI and was struck by how little glue code it required.

“You can just use your MCP client library to get your tools, pass them to TanStack AI, and it works,” Jack said. “The JSON Schema is the interface contract.”

This matters because it means TanStack AI doesn’t need to ship its own MCP client. The Model Context Protocol SDK handles the connection; TanStack AI handles the streaming, the agentic loop, and the UI. Each does what it’s good at.
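A minimal sketch of that division of labor, under stated assumptions: `McpLikeClient` is a local interface that mirrors only the `listTools` and `callTool` operations of an MCP client, `loadTools` is a hypothetical helper, and `fakeClient` is an in-memory stand-in for a real connection. How the resulting tools are handed to TanStack AI is deliberately left out.

```typescript
interface ListedTool {
  name: string;
  description?: string;
  inputSchema: object; // JSON Schema, passed through untouched
}

// Minimal slice of an MCP client, assumed here for illustration.
interface McpLikeClient {
  listTools(): Promise<{ tools: ListedTool[] }>;
  callTool(req: {
    name: string;
    arguments: Record<string, unknown>;
  }): Promise<unknown>;
}

interface ExecutableTool extends ListedTool {
  execute: (args: Record<string, unknown>) => Promise<unknown>;
}

// Fetch the tool list once and attach an executor that routes back to the client.
async function loadTools(client: McpLikeClient): Promise<ExecutableTool[]> {
  const { tools } = await client.listTools();
  return tools.map((tool) => ({
    ...tool,
    execute: (args: Record<string, unknown>) =>
      client.callTool({ name: tool.name, arguments: args }),
  }));
}

// In-memory stand-in for a real MCP connection.
const fakeClient: McpLikeClient = {
  async listTools() {
    return {
      tools: [
        {
          name: "echo",
          description: "Echo the input back.",
          inputSchema: { type: "object" },
        },
      ],
    };
  },
  async callTool(req) {
    return { tool: req.name, received: req.arguments };
  },
};
```

The schema is never rewritten along the way, which is the point of treating JSON Schema as the interface contract.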

Jack sees a future where pre-packaged tool modules become common. You could import a tmdb-tools package, and your agent would instantly know how to search movies. Or bring in a gmail-tools module, and it could read and send email. MCP servers like the ones Arcade provides are already there, offering authenticated access to services like Gmail, Slack, GitHub, and more, with the OAuth complexity handled for you.

Code Mode: when your agent needs to orchestrate

TanStack AI has a new feature in alpha that shows where tool orchestration is heading. It’s called Code Mode, and it addresses a specific problem anyone who’s built agentic workflows has encountered: what happens when one-at-a-time tool calling isn’t efficient enough?

Here’s the scenario. You ask an agent to compare download stats for a dozen packages, or unfollow 200 accounts, or audit every component in a design system. With standard tool calling, the LLM makes one tool call per round trip, and every call and result is added to the context, burning tokens. By iteration 47, the model starts getting lost.

In Code Mode, instead of calling tools one at a time, the agent writes a TypeScript program that orchestrates those tool calls, then executes it in a sandboxed environment. The LLM makes a single execute_typescript call that includes a loop that handles all iterations programmatically.
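To make that concrete, here is the kind of program an agent might submit in a single `execute_typescript` call. This is a sketch, not output from Code Mode: `getWeeklyDownloads` is a hypothetical sandboxed tool, and the numbers in `fakeStats` are made up.

```typescript
// Made-up data standing in for a real stats tool.
const fakeStats: Record<string, number> = {
  zustand: 900,
  jotai: 300,
  valtio: 150,
};

// Counts how many tool calls happen inside the script.
let toolCalls = 0;

// Hypothetical tool exposed inside the sandbox.
async function getWeeklyDownloads(pkg: string): Promise<number> {
  toolCalls += 1;
  return fakeStats[pkg] ?? 0;
}

// The loop lives in code, so the model never sees the intermediate results.
async function compareDownloads(
  packages: string[],
): Promise<{ pkg: string; downloads: number }[]> {
  const rows = await Promise.all(
    packages.map(async (pkg) => ({
      pkg,
      downloads: await getWeeklyDownloads(pkg),
    })),
  );
  // Only this compact, sorted summary would go back into the model's context.
  return rows.sort((a, b) => b.downloads - a.downloads);
}
```

From the model’s point of view this is one tool call, however many times the loop body runs.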

Jack demoed the difference live. A standard tool-calling query used 17K of context. The same query through Code Mode used 4K, with the agent making 12 internal tool calls inside a TypeScript loop it wrote itself. Same result, roughly a quarter of the context, and the agent designed its own report structure in the process.

“It’s better at writing code than at looping through tool calls,” Jack said. “It’ll lose the plot if you make it call tools one by one. But writing the script to do it? Perfectly fine.”

How it works: you give Code Mode your external tools and an isolate driver (currently Isolated VM, with Cloudflare Workers and Bun planned). Code Mode converts the tool definitions into TypeScript types, injects them into the sandbox, and gives the LLM a single tool: execute_typescript. Before execution, the code is compiled. If compilation fails, the agent sees the TypeScript compiler error and self-heals, rewriting until it passes.
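The compile-and-retry step can be sketched as a small loop. Everything here is an assumption made for illustration: `generate` stands in for the LLM, `compile` stands in for the TypeScript compiler, and the `maxAttempts` cap is this sketch’s own safety limit rather than something the interview described.

```typescript
type CompileResult = { ok: true } | { ok: false; error: string };

interface Deps {
  // Stands in for the LLM; receives the previous compiler error, if any.
  generate: (task: string, lastError?: string) => string;
  // Stands in for the TypeScript compiler.
  compile: (code: string) => CompileResult;
}

function generateUntilItCompiles(
  task: string,
  deps: Deps,
  maxAttempts = 3,
): { code: string; attempts: number } {
  let lastError: string | undefined;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const code = deps.generate(task, lastError);
    const result = deps.compile(code);
    if (result.ok) return { code, attempts: attempt };
    lastError = result.error; // feed the compiler error back to the model
  }
  throw new Error(
    `No compiling program after ${maxAttempts} attempts: ${lastError}`,
  );
}

// Toy stand-ins: the first draft has a type error, the retry fixes it.
const toyDeps: Deps = {
  generate: (_task, lastError) =>
    lastError ? "const n: number = 1;" : 'const n: number = "1";',
  compile: (code) =>
    code.includes('"1"')
      ? { ok: false, error: "Type 'string' is not assignable to type 'number'." }
      : { ok: true },
};
```

Nothing is executed until a draft compiles, so type errors are caught before any tool with side effects can run.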

Code Mode orchestrates MCP tools rather than replacing them. Your MCP tools still provide the authenticated, guard-railed actions: send an email, query a database, post to Slack. Code Mode lets the agent write a script that calls those tools efficiently, rather than having the LLM narrate each step.

Code Mode and MCP tools together

Mateo from Arcade joined the conversation to dig into how Code Mode and MCP tools interact in practice. You decide which tools the LLM calls directly and which go inside the Code Mode sandbox. You can even put them in both.

It’s all about matching tool design to context. Your top-level LLM tools that the model calls directly should be conversational and descriptive, suited to a system that reasons in natural language. Your Code Mode tools can be tighter: API-style signatures with typed inputs and outputs, since they’re called by code, not in response to a natural-language prompt.
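As a rough picture of what an API-style signature means here, the sketch below turns a flat JSON Schema into a TypeScript declaration string. It is a simplification that assumes only primitive property types; `toDeclaration` and the `send_email` tool are hypothetical and do not reflect how Code Mode generates its types internally.

```typescript
interface PropSchema {
  type: "string" | "number" | "boolean";
}

interface ObjectSchema {
  type: "object";
  properties: Record<string, PropSchema>;
  required?: string[];
}

interface ToolSpec {
  name: string;
  inputSchema: ObjectSchema;
}

// Render a tool as a typed function declaration that generated code can call.
function toDeclaration(tool: ToolSpec): string {
  const required = new Set(tool.inputSchema.required ?? []);
  const fields = Object.entries(tool.inputSchema.properties)
    .map(([key, prop]) => `${key}${required.has(key) ? "" : "?"}: ${prop.type}`)
    .join("; ");
  return `declare function ${tool.name}(input: { ${fields} }): Promise<unknown>;`;
}

// Hypothetical tool, for illustration only.
const sendEmail: ToolSpec = {
  name: "send_email",
  inputSchema: {
    type: "object",
    properties: {
      to: { type: "string" },
      subject: { type: "string" },
      urgent: { type: "boolean" },
    },
    required: ["to", "subject"],
  },
};
```

A declaration like this gives the compiler enough to reject a script that forgets a required argument, which a prose description alone cannot do.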

Security stays human-controlled. TanStack AI has built-in approval modes at both the direct tool-calling level and within Code Mode. When a sandboxed script tries to call a tool with real-world side effects, like sending money or publishing content, it emits an event to the client, which can approve or reject before execution resumes.
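One way to picture that pause-and-resume behavior is a wrapper that asks before it acts. This is a sketch under stated assumptions: `gated`, `Approver`, and `send_money` are hypothetical names, and the approver here is a simple rule where a real app would show a prompt in the UI.

```typescript
type Decision = "approve" | "reject";

interface ApprovalRequest {
  tool: string;
  args: Record<string, unknown>;
}

// The client-side handler decides; in a real app this would be a UI prompt.
type Approver = (request: ApprovalRequest) => Promise<Decision>;

// Wrap a side-effecting tool so it waits for a decision before running.
function gated<A extends Record<string, unknown>, R>(
  tool: string,
  run: (args: A) => Promise<R>,
  approver: Approver,
): (args: A) => Promise<R> {
  return async (args) => {
    const decision = await approver({ tool, args });
    if (decision === "reject") {
      throw new Error(`${tool} was rejected by the user`);
    }
    return run(args);
  };
}

// Records what was actually executed.
const sent: string[] = [];

// Hypothetical tool with a real-world side effect.
const sendMoney = gated(
  "send_money",
  async (args: { to: string; amount: number }) => {
    sent.push(`${args.to}:${args.amount}`);
    return "ok";
  },
  // Toy policy standing in for a human: approve small amounts only.
  async (request) => (Number(request.args.amount) <= 100 ? "approve" : "reject"),
);
```

A rejection surfaces inside the script as an ordinary error, so the generated code, and the agent behind it, can react to it.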

A practical pattern follows from this: instead of requiring approval for every action, route side effects into a drafts queue. The agent composes five emails; the human scans the list and approves, edits, or rejects each one. It’s faster than interrupting the agent to gate each action as it happens, and the agent receives feedback from rejections.
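A drafts queue can be very small. The sketch below assumes email-like drafts and a single batch review; `DraftQueue`, `Draft`, and `Review` are hypothetical names, not part of TanStack AI or Arcade.

```typescript
type Review =
  | { action: "approve" }
  | { action: "edit"; body: string }
  | { action: "reject"; reason: string };

interface Draft {
  id: number;
  to: string;
  body: string;
}

class DraftQueue {
  private drafts: Draft[] = [];
  private nextId = 1;

  // Called by the agent instead of sending directly.
  add(to: string, body: string): Draft {
    const draft: Draft = { id: this.nextId++, to, body };
    this.drafts.push(draft);
    return draft;
  }

  // Called once by the human for the whole batch.
  review(decide: (draft: Draft) => Review): {
    toSend: Draft[];
    feedback: string[];
  } {
    const toSend: Draft[] = [];
    const feedback: string[] = [];
    for (const draft of this.drafts) {
      const verdict = decide(draft);
      if (verdict.action === "approve") {
        toSend.push(draft);
      } else if (verdict.action === "edit") {
        toSend.push({ ...draft, body: verdict.body });
      } else {
        // Rejection reasons go back to the agent as feedback.
        feedback.push(`Draft ${draft.id} rejected: ${verdict.reason}`);
      }
    }
    this.drafts = [];
    return { toSend, feedback };
  }
}
```

Nothing leaves the queue until the review runs, so the side effect is deferred rather than blocked.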

The reporting agent: generating UI on the fly

To show where this all leads, Jack demoed a “reporting agent” built on Code Mode. He asked it to find the hottest state management libraries. The agent generated a React UI in real time, rendering a custom report with interactive components, live in the browser. When the code broke mid-generation, the agent recognized the error, rewrote the component, and the report rebuilt.

He then shared a banking dashboard where the agent added a custom button with embedded TypeScript in its onPress handler. Later, pressing that button executes the code outside of any LLM conversation. It’s frozen TypeScript, running deterministically. No inference required.

“Think personal operating system,” Jack said. “Give me a dashboard on pork bellies, and it just builds it. Or my home automation app: give me a button that turns on the TV. And it just works.”

This points toward something product managers are starting to grapple with: when the UI can adapt to the user on the fly, you stop thinking feature-first and start thinking capability-first. What base capabilities does the system expose? What MCP tools are available? The LLM glues them together in whatever shape the user needs.

Skills as memory

Code Mode also ships with a skills library. When the agent writes a particularly good TypeScript routine, you can persist it. Next time a user asks for something similar, the agent pulls out the cached skill and executes it immediately. The context savings are significant: a complex routine that took 4K of context to generate costs nearly nothing to replay.
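Viewed as a cache, a skills library needs little more than save, lookup, and eviction. The sketch below is an assumption-laden illustration: `SkillLibrary` and its methods are hypothetical, and the exact-name lookup is a simplification of whatever matching the real library performs.

```typescript
interface Skill {
  name: string;
  description: string;
  code: string; // the TypeScript the agent wrote earlier
  savedAt: number; // epoch milliseconds
}

class SkillLibrary {
  private skills = new Map<string, Skill>();

  // Persist a routine the agent wrote so it can be replayed later.
  save(
    name: string,
    description: string,
    code: string,
    now: number = Date.now(),
  ): void {
    this.skills.set(name, { name, description, code, savedAt: now });
  }

  // Exact-name lookup; a real library would likely need fuzzier matching.
  find(name: string): Skill | undefined {
    return this.skills.get(name);
  }

  // Drop skills older than maxAgeMs and report how many were removed.
  evictOlderThan(maxAgeMs: number, now: number = Date.now()): number {
    let removed = 0;
    this.skills.forEach((skill, key) => {
      if (now - skill.savedAt > maxAgeMs) {
        this.skills.delete(key);
        removed += 1;
      }
    });
    return removed;
  }
}
```

Replaying a stored skill means executing saved code rather than regenerating it, which is where the context savings come from.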

As with any cache, stale entries can be evicted. Jack floated an idea the team is exploring: using a higher-tier model, such as Opus, as a librarian sub-agent that reviews the skill library, finds near-matches, and augments existing tools on demand. The day-to-day work stays on Haiku, which Jack says is remarkably fast at both writing code and calling tools.

The conversation turned to a vivid analogy. Rachel-Lee shared their experience building a system of notes and transcriptions to compensate for a bout of short-term memory loss from COVID. They would wake up every morning with little memory of the day before. With this system, they could pass notes to their future self and still function effectively.

It’s a useful framing for anyone building agent systems. LLMs don’t have linear memory. They wake up fresh every context window. Code Mode’s skills are one form of persistence. MCP tools are the capabilities it reaches for. The two work together: skills teach the agent how to reason about a task; tools give it deterministic actions to execute.

Try it yourself

The fastest way to see TanStack AI working with authenticated MCP tools is Arcade’s step-by-step tutorial: Build an AI Chatbot with Arcade and TanStack AI. You’ll wire up a TanStack Start app with Arcade’s Gmail and Slack tools, handle OAuth authorization automatically, and have a working chatbot in minutes.

To get started with TanStack AI on its own:

npx @tanstack/cli create my-app

TanStack AI Code Mode will launch as an alpha, which would make TanStack AI the first TypeScript AI library to offer code-based tool orchestration with external tool injection. For feedback and discussion, join the TanStack AI channel on Discord. For bugs and feature requests, file issues on the GitHub repo.

Find Jack on YouTube as the Blue Collar Coder, on Bluesky as @jherr.dev, on X as @jherr, and on GitHub. His site is jackherrington.com.

MCP MVP is a video series from Arcade with RL Nabors that spotlights the builders shaping the agentic ecosystem. Watch the full interview with Jack Herrington →

Want to give your agents authenticated access to APIs without managing tokens yourself? See how Arcade handles OAuth for MCP tools.